Three-D Issue 28: Combatting fake news: analysis of submissions to the fake news inquiry

In January 2017, the UK Parliament’s Culture, Media and Sport Committee set up its Fake News Inquiry to investigate ‘the growing phenomenon of widespread dissemination, through social media and the internet, and acceptance as fact of stories of uncertain provenance or accuracy’. It was a response to the public outcry over deception in political campaigning witnessed in the UK’s EU Referendum and the USA’s presidential election in 2016.

For instance, the Leave Campaign claimed that leaving the EU would return £350m a week to the UK’s National Health Service, a claim that was rapidly dropped by Leave, on winning. In the US election, populist, pro-Trump fake news stories – many 100% made up – spread across Facebook, generating more engagement than legacy news stories. These were fuelled by computer science students in Veles, Macedonia who, in a bid to make money rather than spread propaganda, launched multiple US politics websites carrying fake news stories. However, there were also plenty of far right pro-Trump outlets, from which the Macedonian teenagers plagiarized, optimizing their content to maximise user engagement on social media. Fears were also expressed that Russia had meddled in the US election, as the Democratic National Committee’s emails were hacked and leaked on WikiLeaks, prompting Federal Bureau of Investigation probes that enabled the Trump campaign to propagate its claim of ‘crooked Hillary’.

Across 18-19 April 2017, the UK Parliament published the 78 written submissions to its Fake News Inquiry. The significance of the Inquiry goes beyond the UK. This was made clear on our recent trip to SXSW 2017 Interactive, the influential annual technology, journalism and marketing conference in Austin (Texas, USA). This featured many presentations on what should be done about fake news, but was light on regulatory proposals, with multiple actors keenly awaiting the Fake News Inquiry.

We analysed, clustered and assessed the Fake News Inquiry’s 78 written submissions. Unsurprisingly, there is a wide range of definitions of fake news in use, including misinformation (inadvertent online sharing of false information), disinformation (deliberate creation and sharing of information known to be false), propaganda and satire. Most agree that to be considered as fake, the news does not have to be 100% false but may simply incorporate deliberately misleading elements within its content or context.

Solutions suggested

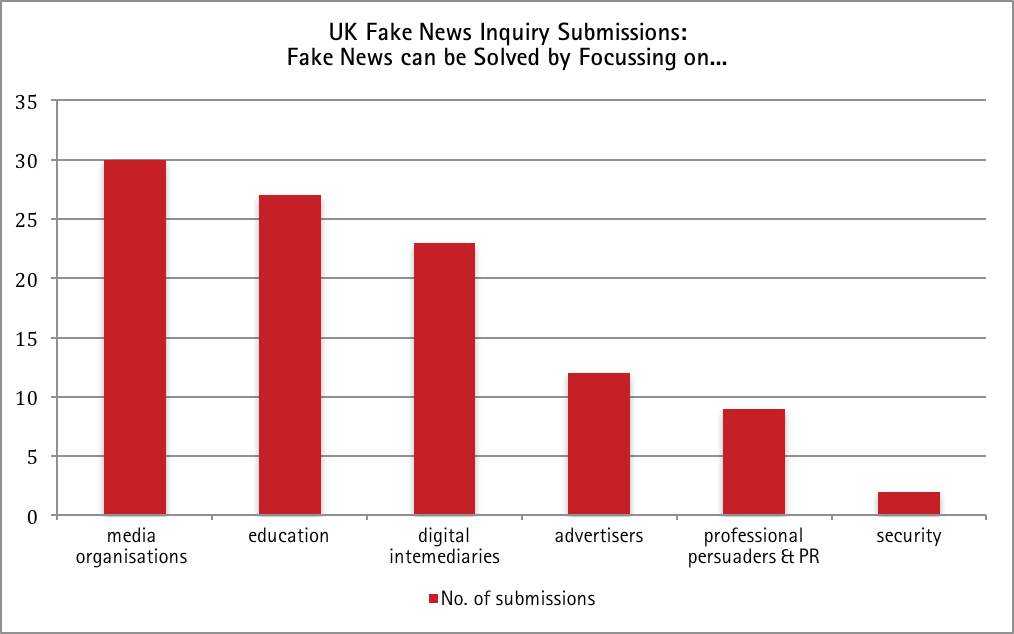

Submissions to the Fake News Inquiry recognise the complexity of the fake news phenomenon, with the majority demanding government regulation or self-regulation across six constituent elements (visualised in Figure 1), which we will assess in turn. Solutions to fake news variously advocate focusing on:

Media organisations: to promote a healthy, pluralistic media economy to maintain and improve accuracy and fact-checking; and to encourage journalists to be more transparent about their sources and to tell the truth (30 submissions);

Education: to increase people’s media and digital literacy (27 submissions);

Digital intermediaries: to divert digital advertising funds to support news outlets; to identify and ban fake news web sites; to be transparent and responsible regarding how they treat news; and to be made liable for hosting provenly false, damaging stories (23 submissions);

Advertising: to assess the impact on the media landscape of the Google–Facebook duopoly of the digital advertising market; to assess whether the Advertising Standards Authority should set standards on how adverts are operated and regulated by digital intermediaries; and to ‘follow the money’ to enable behavioural advertisers to identify fake news sites and avoid their adverts appearing there (12 submissions);

Professional persuaders and PR: to regulate political campaigning to avoid deception (9 submissions);

Security: to give signals intelligence agency GCHQ a leading role in tackling propagandistic fake news instigated by other nations (2 submissions).

Solution 1: Media Organisations

The most frequent solution offered focuses on what media organisations should do. Many argue for legacy news in the UK to be encouraged and supported. Some, for instance, urge the maintenance of already high standards of professional accuracy and fact-checking (e.g. legacy news broadcasters ITN and BBC, professional news body Society of Editors, and multimedia news agency Press Association). The problem with this, however, is that polls consistently show low trust in journalism, especially self-regulated and unregulated outlets. It is not a matter of maintaining trust, then, but of actively growing it from very low levels.

A more pro-active solution is to strengthen fact-generation and fact-checking by increasing news resources through: higher staffing levels (National Union of Journalists); employment conditions that promote quality reporting, and collaborative journalism (BBC); start-up ventures to fill the gap in real and trusted local news reporting left by the decline of bigger media (Robert Campbell, University of South Wales); training journalists to handle data (Royal Statistical Society); and using new technology such as an ‘inexpensive cloud-based virtual newsroom’ (Michael Leidig). Ultimately, however, journalism’s ability to consistently fact-check is compromised by journalism’s long-standing financial decline in the digital age, and this is unlikely to be solved any time soon.

Another proffered solution is for journalists to be more transparent about their sources, including think tanks and their sometimes biased research, thereby enabling news consumers to better judge news veracity and bias (Martin Moore (King’s College London), Tobacco Control Research Group (University of Bath)). However, this raises problems where opacity of sources is needed to bring truth to light (for instance, to encourage whistle-blowing). A related solution proposed by Prof David Miller (University of Bath) and his colleagues is a typology of deception to enable journalists to better recognise (and avoid) propaganda: in this way, sources remain protected, but it does assume that all journalists would ethically strive to avoid deception. Unfortunately, commercial pressures militate against this ethical impulse, as the Leveson Inquiry attests.

Recognising the blind spots and failure of self-regulation, journalists and academics propose regulation to promote a healthy, pluralistic media economy. For instance, the National Union of Journalists advocates a raft of regulatory measures including: treating established titles as community assets; preventing further concentration of media ownership; and establishing funding arrangements to ensure the BBC’s future. Beyond media pluralism, others focus on the need for regulation to encourage journalists to tell the truth. Muslim Council of Britain recommends a more effective press regulatory structure (IPSO) regarding the Editors’ Code, appropriate deterrents, taking action and independence. IMPRESS (The Independent Monitor for the Press) argues for completion of the implementation of the post-Leveson framework for press regulation. Regarding financial news, where fake news can cause instant financial damage, several bodies propose that government should regulate financial news and enforce a system of fast redress (Gavin Devine CEO Newgate Communications, The Campaign for Responsible Financial Journalism). However, regulating media organisations to promote pluralism and to encourage journalists to tell the truth are hardly new demands: that they have not yet been realized does not bode well for any further such demands emanating from the Fake News Inquiry.

Solution 2: Education

MeCCSA readers might be heartened that a popular solution suggested was greater education across schools and the general public: specifically, media and digital literacy initiatives to foster critical thinking about the democratic process, the digital environment and news. This solution was proffered by a wide range of bodies including legacy news (ITV), journalists (National Union of Journalists, News Media Alliance), the UK’s professional body representing PR, communications, public affairs, and lobbying practitioners (Public Relations and Communications Association), charities (Research Libraries UK, The Royal Statistical Society, Wikimedia UK), teenagers and academics. For most, this requires a regulatory approach to influence national curricula and run national literacy campaigns. Such regulatory support (legislation, policies, funding) and implementation would undoubtedly be beneficial but it is a long-term solution, and certainly no quick fix.

Solution 3: Digital Intermediaries

The third most popular solution is to focus on the digital intermediaries – the social media and search engine companies that enable fake news to be widely shared: two submissions are from Google and Facebook themselves. One solution proffered by these companies is for digital intermediaries to enable fact-checking/verification, flagging, downgrading or blocking of fake content. Other solutions that Google and Facebook are enacting include: reducing the ability to spoof domains, thereby minimising the prevalence of sites pretending to be real publications (Facebook); and restricting the flow of money to deliberately misleading content (Google). They also both support developing quality journalistic content online via Google News Lab’s Digital News Initiative (Google); and via Facebook’s Journalism Project to strengthen its relationship with publishers through outreach and measures to recognise and reward real news (Facebook).

While Facebook and Google have taken some steps to address fake news, News Media Alliance (the voice of national, regional and local news media organisations in the UK) describes their efforts as ‘underwhelming … public relations’. Instead, journalists and academics alike call for more fundamental change to affect the digital intermediaries’ bottom line. This includes calls for transparency around algorithms that prioritise news stories on social media platforms, to ensure that viral fake content is not presented as news (ITN, News Media Alliance). However, these algorithms are proprietary and lucrative, and hence unlikely ever to be revealed by the digital intermediaries.

Given that digital intermediaries are unlikely to compromise their lucrative business models, some propose regulation of digital intermediaries. On the financial side, suggestions include surcharging internet service providers to create a local news fund from which might be bred hyper-local news providers (National Union of Journalists). Other regulatory suggestions include: taking steps to ensure that digital intermediaries are transparent and responsible in discharging their duties, especially in relation to news (National Union of Journalists, IMPRESS (The Independent Monitor for the Press)); and that search engines should be liable if they continue hosting damaging stories that are manifestly and provenly false (The Campaign for Responsible Financial Journalism). However, such regulation of footloose global companies runs into two principal problems: 1) their transnational power means they are difficult to regulate (especially for a region as small as the UK); 2) they are keen not to be seen as media companies (rather than technology companies) because this invites extra levels of accountability regarding the content they carry.

Solution 4: Advertisers

A smaller number of submissions proffer advertiser-focused solutions. These include digital intermediaries self-policing their behavioural and programmatic advertising networks, and identifying and cutting off advertisers that support fake news sites (ITN, Press Association, Public Relations and Communications Association, Google, Internet Advertising Bureau, Prof Vian Bakir and Andrew McStay (Bangor University)). Proponents of self-regulation point out that the ad industry has a self-interest in implementing these solutions, to ensure brand safety (Home Marketing Limited, Prof Vian Bakir and Andrew McStay (Bangor University)).

Addressing a different aspect of the fake news problem – namely, the decline of quality news – several news organisations propose regulatory-enforced advertising-focused solutions to help finance struggling legacy news outlets. For instance, Guardian News & Media propose examining the impact of Google and Facebook’s dominance of digital advertising on future investment in high quality news. News Media Association proposes a raft of regulatory measures to assess the impact on the media landscape of the Google–Facebook duopoly of the digital advertising market. Charity Full Fact asks whether the Advertising Standards Authority has a role in setting standards about how adverts are operated and regulated by Twitter, Facebook, and Google. Certainly, given that legacy news as a whole struggles to make a profit in the current digital news environment, and is dependent on Facebook and Google for news referrals, a national, or still better, supra-national, regulatory approach would be stronger than individual news organisations brokering their own deals. However, achieving supra-national consensus across countries with varying degrees of commitment to supporting independent, high quality news, would be challenging: and it will be instructive to see if the UK government will go it alone in this globally interconnected area.

Solution 5: Professional Persuaders and PR

Nine submissions focus on the professional persuaders and PR. Problematising the culture of deceit, Committee on Standards in Public Life notes that the public wants those in public life to be honest and tell the truth. Prof David Miller (University of Bath) and his colleagues argue for the PR industry to be less deceptive; and Society of Editors notes that politicians’ use of social media ‘removes the checks and balances that the traditional media would normally exercise’.

Recognising that professional persuaders are unlikely to heed exhortations to avoid deception (polls have long shown that people do not trust politicians to tell the truth), diverse parties urge regulation on political campaigning. These include a QC (K.P.E.Lasok QC), a website on the strategies, appeal and effectiveness of political advertising (politicaladvertising.co.uk), charities (Full Fact, Voice of the Listener), academics and citizens.

Despite a broad constituency arguing for regulation of political campaigning, others protest against any government controls on what it perceives to be the truth (e.g. The Rt. Hon the Lord Blencathra). A number of bodies argue that, to protect freedom of political speech, any new form of censorship should be seen as a proportionate measure of last resort. This point is upheld by a UK press self-regulator (IMPRESS (The Independent Monitor for the Press)), the UK’s PR body (Public Relations and Communications Association), an international charity that aims to empower local media worldwide to give people the news they need (Internews), and academic historians of propaganda, communication and rumour (David Coast (Bath Spa University) and his colleagues).

Yet, what constitutes proportionate censorship given the coming age of automated propaganda has yet to be determined. International affairs think tank analyst, Ben Nimmo (Information Defence, Atlantic Council Digital Forensic Research Lab), points to the problem of large-scale, automated distribution networks that use large numbers of fake accounts to propagate messages. Similarly, Prof Vian Bakir and Andrew McStay (Bangor University) warn of a near-horizon future, where, through sentiment analysis and algo-journalism, the spread of fake news could be intensified through empathically-optimised automated fake news: they urge preventative discussion about ethical ways forward with the emergent empathic media sector, including companies such as IBM, Cambridge Analytica, Crimson Hexagon and Narrative Science. However, the alleged use of analytics companies (such as Cambridge Analytica) in the Leave Campaign during the EU Referendum raises serious doubts as to whether politicians would voluntarily eschew using commercial firms that can deliver potent insights into how to target people’s emotions.

Solution 6: Security

Two submissions see fake news as a cyber-security problem that needs to be addressed by the security sector. For instance, The Royal Statistical Society suggests that where fake news has been propagated by other states as part of an ‘information war’, ‘it is for GCHQ to play a leading role in considering how this should be tackled’. Certainly, Edward Snowden’s leaks showed that even as far back as 2013, GCHQ had numerous tools to influence online material; and the Investigatory Powers Act 2016 acknowledges intelligence agencies’ extensive hacking powers. It is likely, then, that GCHQ already plays a leading role in tackling propagandistic fake news instigated by other nations.

Conclusion

Of these regulatory solutions, those concerning education – i.e. increasing people’s digital and media literacy – are perhaps the least contentious; and those concerning security are likely already being enacted, albeit in secret. The other regulatory measures suggested, however, are unlikely to bear fruit in the near future. Regulating media organisations to promote pluralism and to encourage journalists to tell the truth is not a new demand, especially since the 2012 Leveson Inquiry; that it has not yet been implemented does not bode well for fresh demands from the Fake News Inquiry. Regulating political campaigning runs into human rights problems of freedom of speech, as well as resistance from the journalism and PR industries themselves. Regulating digital intermediaries regarding both their treatment of fake news, and their impact on the digital advertising market, faces issues of government will when dealing with powerful, footloose, global media companies which provide platforms and services that are central to the digital economy and modern life.

As for self-regulation, that enacted by news organisations and professional persuaders/PR has produced low trust levels in both journalism and in politicians – a situation pre-dating the fake news phenomenon: and the digital environment generates commercial pressures that make it harder for news outlets to support quality news. Further calls for self-regulation of journalism and PR industries, then, are unlikely to be effective. Self-regulation of digital intermediaries is also likely to be limited in scope: Facebook and Google have taken some steps to address fake news, but they are unlikely to self-regulate against the interests of their own business models.

For an immediate win, follow the money

To conclude, while the issue of regulating professional persuaders/PR and digital intermediaries is ripe for renewed attention, the complex issues involved include human rights obligations to freedom of speech and trans-national commercial interests – neither of which is likely to be easily resolved. Whilst increased regulatory efforts concerning media and digital literacy are less contentious and worthy long-term investments, for a more immediate win, it is to digital advertisers’ self-regulation that attention should be paid.

For brand safety reasons, the ad industry has self-interest in policing its behavioural and programmatic advertising networks to identify and cut off advertisers that support fake news sites. Advertisers – even the most disreputable – are unlikely to want their advertising associated with content that, by its very nature (i.e. fake news), cannot be trusted.

Vian Bakir & Andrew McStay
